Journals

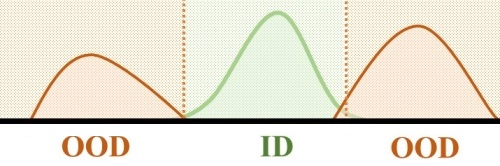

Out-of-Distribution Detection in Time-Series Domain: A Novel Seasonal Ratio Scoring Approach.

T. Belkhouja, Y. Yan, and J. Doppa. ACM Transactions on Intelligent Systems and Technology (TIST), 2023.

Dynamic Time Warping based Adversarial Framework for Time-Series Domain.

T. Belkhouja, Y. Yan, and J. Doppa. IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI), 2022.

Adversarial Framework with Certified Robustness for Time-Series Data via Statistical Features.

T. Belkhouja and J. Doppa. Journal of Artificial Intelligence Research (JAIR), 2022.

Analyzing Deep Learning for Time-Series Data through Adversarial Lens in Mobile and IoT Applications.

T. Belkhouja and J. Doppa. IEEE Transactions on Computer-Aided Design of Integrated Circuits and Systems (TCAD), 2020.

Biometric-based Authentication Scheme for Implantable Medical Devices during Emergency Situations.

T. Belkhouja, X. Du, A. Mohamed, A.K. Al-Ali, and M. Guizani. Future Generation Computer Systems (Elsevier), 2019.

Symmetric Encryption Relying on Chaotic Henon System for Secure Hardware-Friendly Wireless Communication of Implantable Medical Systems.

T. Belkhouja, X. Du, A. Mohamed, A.K. Al-Ali, and M. Guizani. Journal of Sensor and Actuator Networks, 2018.

Conference Papers

Adversarial Framework with Certified Robustness for Time-Series Data via Statistical Features.

T. Belkhouja and J. Doppa. 32nd International Joint Conference on Artificial Intelligence (IJCAI), 2023.

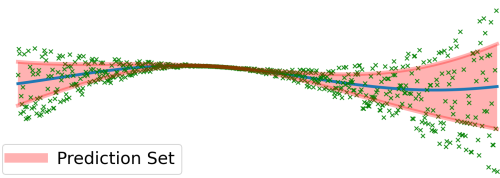

Probabilistically Robust Conformal Prediction.

S. Ghosh, Y. Shi, T. Belkhouja, Y. Yan, J. Doppa, and B. Jones. 39th Conference on Uncertainty in Artificial Intelligence (UAI), 2023.

Improving Uncertainty Quantification of Deep Classifiers via Neighborhood Conformal Prediction: Novel Algorithm and Theoretical Analysis.

T. Belkhouja*, S. Ghosh*, Y. Yan, and J. Doppa. 37th AAAI Conference on Artificial Intelligence, 2023. (* denotes equal contribution)

Energy-Efficient Missing Data Recovery in Wearable Devices: A Novel Search-based Approach.

T. Belkhouja*, D. Hussein*, G. Bhat, and J. Doppa. ACM/IEEE International Symposium on Low Power Electronics and Design (ISLPED), 2023. (* denotes equal contribution)

Training Robust Deep Models for Time-Series Domain: Novel Algorithms and Theoretical Analysis.

T. Belkhouja, Y. Yan, and J. Doppa. 36th AAAI Conference on Artificial Intelligence, 2022.

Reliable Machine Learning for Wearable Activity Monitoring: Novel Algorithms and Theoretical Guarantees.

T. Belkhouja*, D. Hussein*, G. Bhat, and J. Doppa. 41st International Conference on Computer-Aided Design (ICCAD), 2022. (* denotes equal contribution)

Role-based Hierarchical Medical Data Encryption for Implantable Medical Devices.

T. Belkhouja, S. Sorour, and M. Hefeida. IEEE Global Communications Conference (GlobeCom), 2019.

Light-Weight Solution to Defend Implantable Medical Devices Against Man-In-The-Middle Attack.

T. Belkhouja, X. Du, A. Mohamed, A.K. Al-Ali, and M. Guizani. IEEE Global Communications Conference (GlobeCom), 2018.

Salt Generation for Hashing Schemes based on ECG readings for Emergency Access to Implantable Medical Devices.

T. Belkhouja, X. Du, A. Mohamed, A.K. Al-Ali, and M. Guizani. International Symposium on Networks, Computers and Communications (ISNCC), 2018.

Light-Weight Encryption of Wireless Communication for Implantable Medical Devices Using Henon Chaotic System.

T. Belkhouja, X. Du, A. Mohamed, A.K. Al-Ali, and M. Guizani. Wireless Networks and Mobile Communications International Conference (WINCOM), 2017.

New Plain-Text Authentication Secure Scheme for Implantable Medical Devices with Remote Control.

T. Belkhouja, X. Du, A. Mohamed, A.K. Al-Ali, and M. Guizani. IEEE Global Communications Conference (GlobeCom), 2017.